Hello! I'm a Postdoc at MIT with Prof. Tomaso Poggio.

I work on Machine Learning Theory.

Recently, I have been exploring the fundamental limits of agentic systems and the mathematical theory one can develop around them.

For instance, with the pAI project I want to understand to what extent agentic systems can automate high-quality research, and then identify, approach, and possibly attain that upper bound on automation.

In parallel, I continue to work on the theoretical foundations of machine learning, particularly on deep neural networks and their optimization, as I did during my PhD. In this direction, I'm interested in (1) where optimization algorithms converge on general landscapes, (2) how hyperparameters affect that, and (3) whether we can link this to changes in the performance of the resulting models.

Selected talks

This work is collected in a more accessible and continuously updated form at pAI.

Boosting

Weak reasoning models

Agentic Systems as Boosting Weak Reasoning Models.

Code

Distribution-aware solvers

Distribution-Aware Algorithm Design with LLM Agents.

pAI/MSc

Multi-agent research workflow

pAI/MSc: ML Theory Research with Humans on the Loop.

Survey

Academic research automation

Agent Systems for Academic Research Automation.

Does Weight Decay Enhance Training Stability?

Too Sharp, Too Sure: When Calibration Follows Curvature.

Momentum Further Constrains Sharpness at the Edge of Stochastic Stability.

Same Error, Different Function: The Optimizer as an Implicit Prior in Financial Time Series.

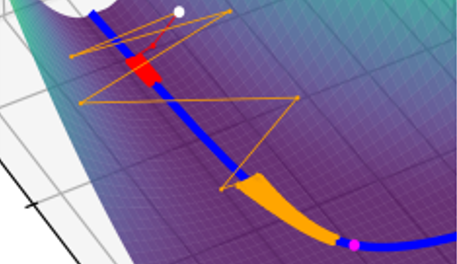

Edge of Stochastic Stability: Revisiting the Edge of Stability for SGD.

Does SGD Seek Flatness or Sharpness? An Exactly Solvable Model.

Gradient Descent Converges Linearly to Flatter Minima than Gradient Flow in Shallow Linear Networks.

How Neural Networks Learn the Support is an Implicit Regularization Effect of SGD.

On the Trajectories of SGD Without Replacement.

Uncertainty

Complexity can hurt confidence

Models with higher effective dimensions tend to produce more uncertain estimates.

Deep neural network approximation theory for high-dimensional functions.

High-dimensional approximation spaces of artificial neural networks and applications to partial differential equations.

At MIT, I am also helping organize the Poggio Lab seminar series AI: Foundations -- for Academia (and Startups).

At some point during my PhD, I became interested in the ethical aspects of my job. I helped organize several events to raise the community's awareness of the responsibilities that modelers and statisticians bear for the high-stakes decisions and policies based on their work. See the CEST-UCL Seminar series on responsible modelling and check out our conference. I was on the committee of the Princeton AI Club (PAIC), where we hosted many exciting talks featuring Yoshua Bengio, Max Welling, Chelsea Finn, Tamara Broderick, and others. I have also spoken about the advent of AI and its impact on society on Italian public radio, including on Zapping on Rai Radio 1.

Last modified on March 21, 2026.

Template credits to Jon Barron!